Extracting information from technical drawings

Frank Weilandt (PhD)

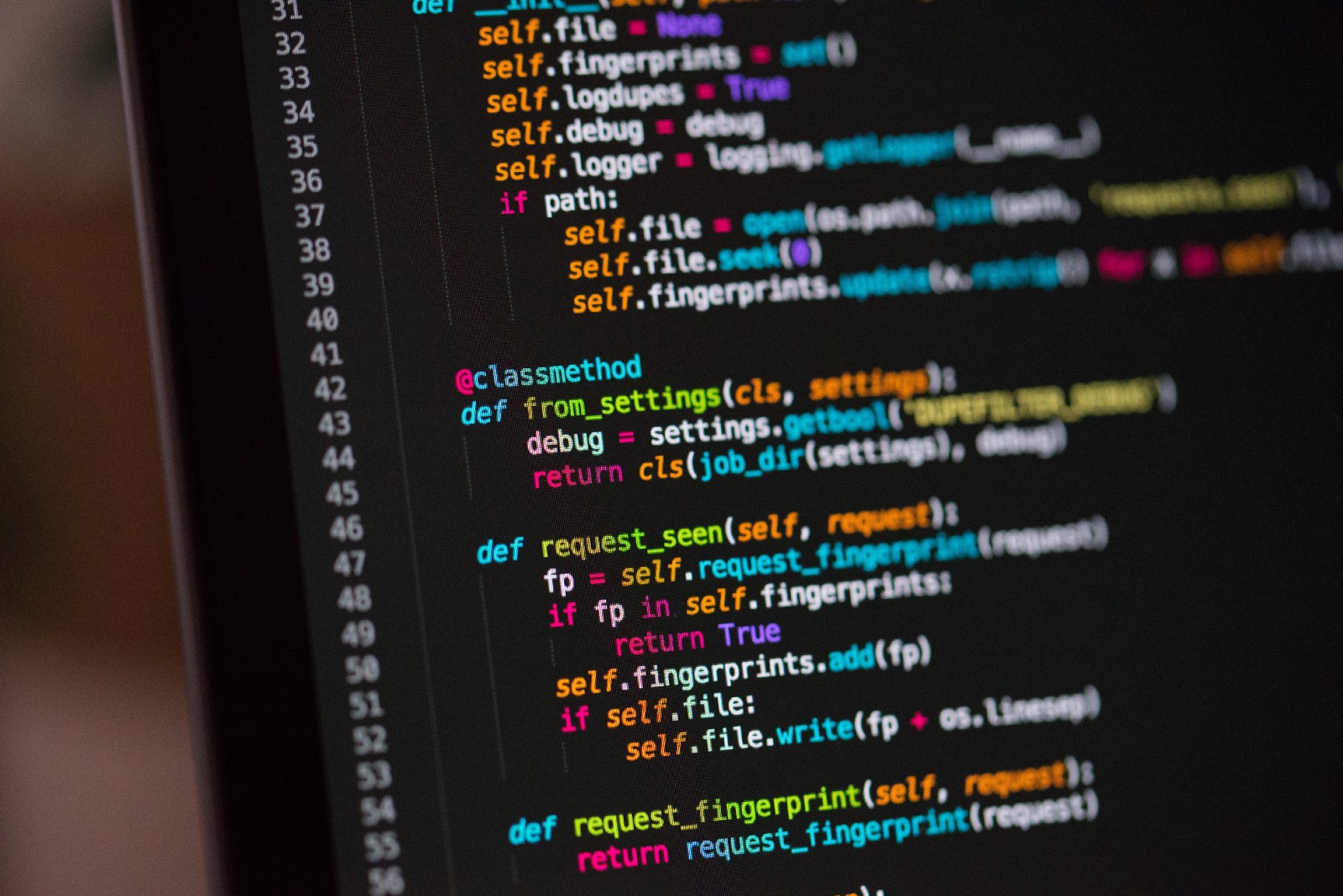

Did you ever need to combine data about an object from two different sources, say, images and text? We are often facing such challenges during our work at dida. Here we present an example from the realm of technical drawings. Such drawings are used in many fields for specialists to share information. They consist of drawings that follow very specific guidelines so that every specialist can understand what is depicted on them. Normally, technical drawings are given in formats that allow indexing, such as svg, html, dwg, dwf, etc. but many, especially older ones, only exist in image format (jpeg, png, bmp, etc.), for example from book scans. This kind of drawings is hard to access automatically which makes its use hard and time consuming. In this regard, automatic detection tools could be used to facilitate the search. In this blogpost, we will demonstrate how both traditional and deep-learning based computer vision techniques can be applied for information extraction from exploded-view drawings. We assume that such a drawing is given together with some textual information for each object on the drawing. The objects can be identified by numbers connected to them. Here is a rather simple example of such a drawing: An electric drill machine. There are three key components on each drawing: The numbers, the objects and the auxiliary lines. The auxiliary lines are used to connect the objects to the numbers. The task at hand will be to find all objects of a certain kind / class over a large number of drawings , e.g. the socket with number 653 in the image above appears in several drawings and even in drawings from other manufacturers. This is a typical classification task, but with a caveat: Since there is additional information for each object accessible through the numbers, we need to assign each number on the image to the corresponding object first. Next we describe this auxiliary task can be solved by using traditional computer vision techniques.

.jpg)