Pretraining for Remote Sensing


In this blog post I will describe a number of pretraining tasks that you can use, either separately or in combination, to get good “starting” weights before training a model on your actual labelled dataset.

Typically, remote sensing tasks come under the umbrella of semantic segmentation, so all the pretraining techniques described here are for tasks that output a prediction for each pixel and use a U-Net as the architecture.

Background

Remote sensing (analysis of satellite or aerial images) is a great application of deep learning because of the huge amount of data available. For example, ESA’s archive of Sentinel imagery contains ~10 PB, approximately 7000 times the size of ImageNet!

Unfortunately, there is a catch: labels for remote sensing images are often extremely difficult to produce. For example, in our Convective Clouds detection project, labelling often required expert advice and the use of external radar data.

One way to take advantage of the enormous amount of unlabelled data is to pretrain on a task that we can easily generate labels for. Hopefully, by doing this our neural network model can learn features that are also useful for the main task that we are interested in. This approach is also known as transfer learning.

Image Inpainting

Here we randomly mask out blocks of an image and train the model to predict their content.
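To make the masking step concrete, here is a minimal NumPy sketch. The function name, the number of blocks and the block size are illustrative assumptions, not the values used for the model in this post.

```python
import numpy as np

def mask_random_blocks(image, n_blocks=4, block_size=16, rng=None):
    """Zero out random square blocks in a (channels, height, width) image.

    Returns the corrupted image and a boolean (height, width) mask that is
    True where pixels were removed. n_blocks and block_size are assumed values.
    """
    rng = np.random.default_rng() if rng is None else rng
    _, height, width = image.shape
    mask = np.zeros((height, width), dtype=bool)
    for _ in range(n_blocks):
        # Pick the top-left corner so that the block fits inside the image
        top = rng.integers(0, height - block_size + 1)
        left = rng.integers(0, width - block_size + 1)
        mask[top:top + block_size, left:left + block_size] = True
    corrupted = image.copy()
    corrupted[:, mask] = 0.0  # the same pixels are removed in every channel
    return corrupted, mask

# Random stand-in for a 13-channel Sentinel 2 tile
tile = np.random.default_rng(0).random((13, 64, 64)).astype(np.float32)
corrupted, mask = mask_random_blocks(tile, rng=np.random.default_rng(1))
```

The corrupted tile is the network input, the original tile is the target, and the mask can be used to restrict the loss to the removed pixels if desired.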

I’ve included an example of the output of a model. This was trained with a combination of L1 and adversarial loss using 50 randomly sampled Sentinel 2 overland images. The model uses all 13 channels for both input and output, but I’ve only shown the RGB channels.
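As a rough illustration of how an L1 term and an adversarial term can be combined for the generator, here is a NumPy sketch. The weighting `adv_weight` and the non-saturating form of the adversarial term are my assumptions for this example, not necessarily what was used for the model above.

```python
import numpy as np

def inpainting_generator_loss(pred, target, disc_logits_fake, adv_weight=0.01):
    """L1 reconstruction loss plus a weighted adversarial term.

    disc_logits_fake are the discriminator's raw scores for the generated
    images. adv_weight is an assumed hyperparameter.
    """
    l1 = np.mean(np.abs(pred - target))
    # Non-saturating generator loss: -log(sigmoid(x)) == log(1 + exp(-x))
    adversarial = np.mean(np.logaddexp(0.0, -disc_logits_fake))
    return l1 + adv_weight * adversarial
```

The L1 term keeps the prediction close to the true pixels, while the adversarial term pushes the generator towards outputs that the discriminator scores as real.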

You can see that it performs better on some backgrounds than on others. We could probably improve this by downloading even more data and by tuning the hyperparameters.

Road Segmentation

OpenStreetMap is an incredible resource of free, open source geospatial data. The Python library osmnx makes it very easy to download and start working with it.

Below you can see two examples of Sentinel 2 image tiles with roads overlaid.

The disadvantage here is that OpenStreetMap's completeness varies significantly across the world. You should also be careful to remove tunnels from the dataset!

This is a difficult task to learn: the satellite images are cloudy, the roads are typically less than 10 m wide (the minimum Sentinel 2 pixel size) and OpenStreetMap is not complete. In fact, there is a risk that we are overfitting to the roads that are present in OpenStreetMap rather than detecting roads in general.
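A minimal sketch of turning road geometries into a per-pixel target while dropping tunnels might look like this. The input structure is hypothetical: I assume each road has already been downloaded, projected into tile pixel coordinates, and stored with its OpenStreetMap tags.

```python
import numpy as np

def rasterize_roads(roads, height, width):
    """Burn road centre lines into a binary (height, width) mask.

    `roads` is a hypothetical list of dicts with a "tags" dict and "pixels",
    a list of (row, col) vertices in tile pixel coordinates.
    """
    mask = np.zeros((height, width), dtype=np.uint8)
    for road in roads:
        if road["tags"].get("tunnel", "no") != "no":
            continue  # tunnels are not visible from above
        vertices = np.asarray(road["pixels"], dtype=float)
        for start, end in zip(vertices[:-1], vertices[1:]):
            # Sample densely enough that no pixel along the segment is skipped
            n_samples = int(np.abs(end - start).max()) * 2 + 2
            points = np.linspace(start, end, n_samples)
            rows = np.rint(points[:, 0]).astype(int)
            cols = np.rint(points[:, 1]).astype(int)
            inside = (rows >= 0) & (rows < height) & (cols >= 0) & (cols < width)
            mask[rows[inside], cols[inside]] = 1
    return mask

roads = [
    {"tags": {"highway": "residential"}, "pixels": [(0, 0), (7, 7)]},
    {"tags": {"highway": "primary", "tunnel": "yes"}, "pixels": [(0, 7), (7, 0)]},
]
road_mask = rasterize_roads(roads, 8, 8)
```

Here the second road is skipped because of its `tunnel` tag, so only the first diagonal ends up in the mask. The roads are burned in one pixel wide, which matches the observation that most roads are narrower than a single pixel.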

LCLU Segmentation

The Copernicus land monitoring service provides raster land cover / land use (LCLU) data at 100 m resolution. We can train a model to predict this segmentation from satellite imagery.

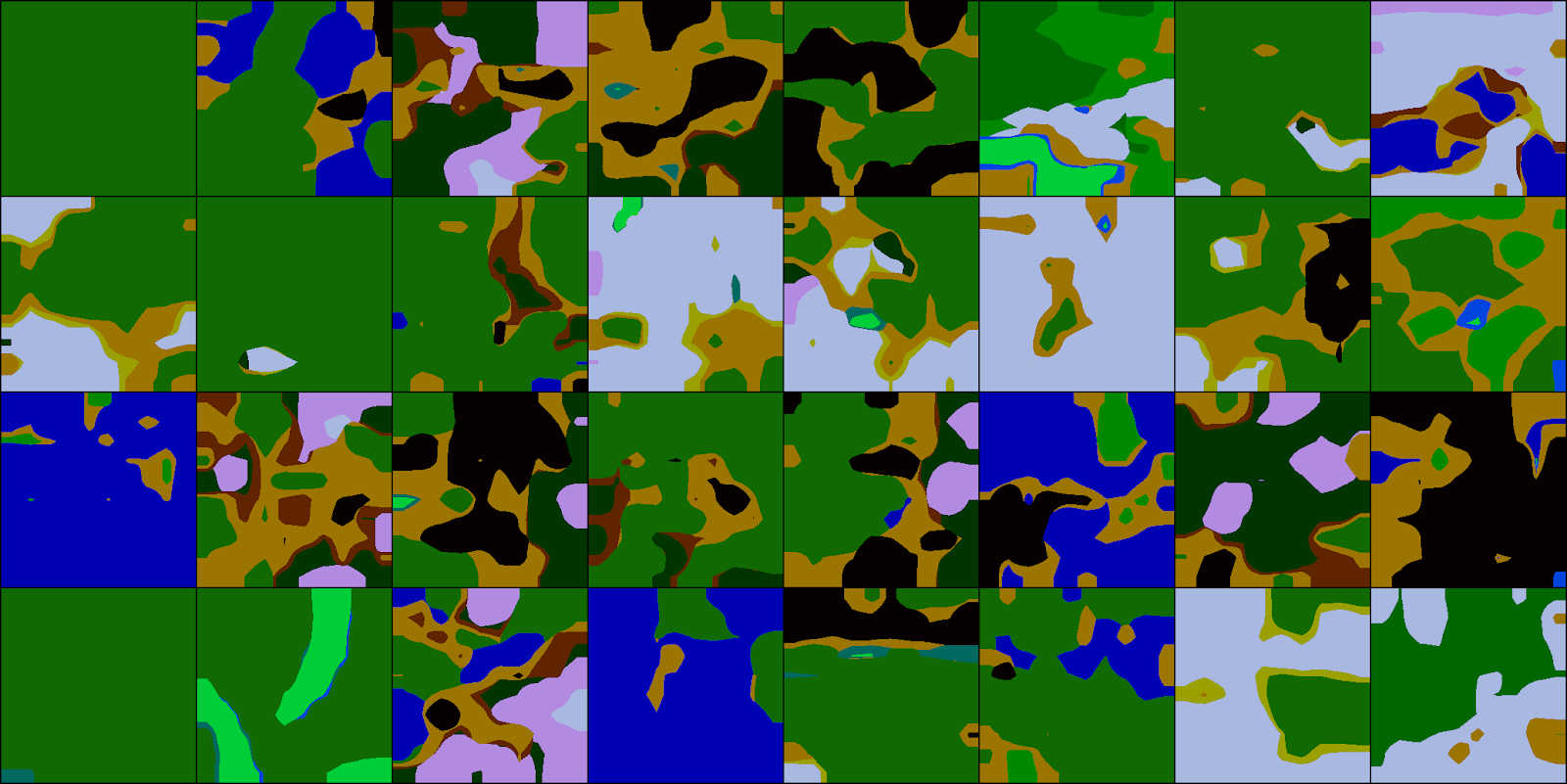

Below you can see input data, targets and output from the validation set of a model trained to predict land cover using Sentinel 1 data.
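Because the land cover raster is much coarser than the imagery, the labels have to be brought onto the image grid before training. A minimal sketch, assuming a 10 m image grid that is perfectly aligned with the 100 m label grid (in practice a reprojection step would usually be needed first):

```python
import numpy as np

def upsample_labels(labels, factor=10):
    """Nearest-neighbour upsampling of an integer class raster.

    factor=10 assumes 100 m labels and a 10 m image grid.
    """
    return np.repeat(np.repeat(labels, factor, axis=0), factor, axis=1)

coarse = np.array([[1, 2],
                   [3, 4]])  # made-up class ids
fine = upsample_labels(coarse)  # shape (20, 20)
```

Nearest-neighbour upsampling is used because class ids are categorical, so interpolating between them would produce meaningless values.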

Cross Sensor Translation

Finally, we can experiment with translation between Sentinel 1 and Sentinel 2 data using a CycleGAN architecture.
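The core idea of CycleGAN is the cycle-consistency term: translating an image to the other sensor and back should recover the original. The sketch below shows only that term (a full CycleGAN also has adversarial losses for both directions), and the two generators are toy stand-ins rather than real networks.

```python
import numpy as np

def cycle_consistency_loss(s1_batch, s2_batch, s1_to_s2, s2_to_s1):
    """L1 distance between each batch and its round-trip translation."""
    s1_reconstructed = s2_to_s1(s1_to_s2(s1_batch))
    s2_reconstructed = s1_to_s2(s2_to_s1(s2_batch))
    return (np.mean(np.abs(s1_reconstructed - s1_batch))
            + np.mean(np.abs(s2_reconstructed - s2_batch)))

# Toy generators that happen to be exact inverses of each other
def toy_s1_to_s2(x):
    return 2.0 * x

def toy_s2_to_s1(x):
    return x / 2.0

rng = np.random.default_rng(0)
s1 = rng.random((4, 2, 16, 16))
s2 = rng.random((4, 2, 16, 16))
loss = cycle_consistency_loss(s1, s2, toy_s1_to_s2, toy_s2_to_s1)
```

With real Sentinel 1 and Sentinel 2 data the two generators would map between different channel counts. The toy example keeps the shapes equal purely for simplicity.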

This is something we are currently working on, so I don’t have an example image to show yet. Hopefully, it will allow us to simultaneously pretrain Sentinel 1 and Sentinel 2 models.

Future work

The most important next step is to rigorously assess how pretraining on these tasks affects performance on a real problem such as our ASMSpotter project.

In the future, we would like to experiment with more tasks. We could also use OpenAI’s Reptile algorithm to combine training on a large number of tasks and learn starting weights that should generalise well to new tasks.
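For readers unfamiliar with Reptile, the update is simple: adapt a copy of the weights to one sampled task with a few gradient steps, then move the shared weights part of the way towards the adapted copy. Here is a minimal sketch on toy quadratic tasks. The step sizes, step counts and tasks are all made up for illustration.

```python
import numpy as np

def reptile_step(weights, task_grad_fn, inner_steps=5, inner_lr=0.1, meta_lr=0.5):
    """One Reptile meta-update for a single sampled task.

    task_grad_fn(w) returns the gradient of the task loss at w.
    weights must be a float array.
    """
    adapted = weights.copy()
    for _ in range(inner_steps):
        adapted -= inner_lr * task_grad_fn(adapted)  # plain SGD on the task
    # Move the shared weights towards the task-adapted weights
    return weights + meta_lr * (adapted - weights)

# Toy tasks: each one minimises ||w - centre||^2 for a different centre
centres = [np.array([1.0, 0.0]), np.array([0.0, 1.0])]
rng = np.random.default_rng(0)
weights = np.zeros(2)
for _ in range(200):
    centre = centres[rng.integers(len(centres))]
    weights = reptile_step(weights, lambda w, c=centre: 2.0 * (w - c))
```

In the pretraining setting, each "task" would be one of the pretext tasks above and `weights` would be the U-Net parameters.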

Keep an eye out for a future post containing systematic results for the effect of these pretraining tasks as well as any other updates!

Contact

If you would like to speak with us about this topic, please reach out and we will schedule an introductory meeting right away.