Image Captioning with Attention

Humans can glance at an image and easily tell what is happening in it; grasping and describing the details of a scene is a basic human ability. Can machines recognize the different objects in an image and their relationships, and describe them in natural language just like humans do? This is the problem image captioning tries to solve. Image captioning means describing images in a natural language (such as English), and it combines two core areas of artificial intelligence: computer vision and natural language processing. It is an application of deep learning that has evolved considerably in recent years. This article provides a high-level overview of image captioning architecture and explores the attention mechanism, one of the most common approaches proposed to solve this problem.

The most recent image captioning work has shown the benefits of a transformer-based approach, which relies solely on attention and learns the relationships between elements of a sequence without recurrent or convolutional layers. We will not consider transformer-based architectures here; instead, we focus on attention added to the encoder-decoder framework.

Background

The image captioning task is significantly more complex than the relatively well-studied tasks of object recognition and image classification, which have been a primary focus of the computer vision community. Image captioning frameworks must not only be powerful enough to solve the computer vision challenge of determining the relevant objects in an image, but must also capture their actions, attributes, and relations to each other, and express this complex visual information in descriptive language. Advances in deep neural network architectures for both image and language processing have made the image captioning problem approachable despite these challenges.

How does it work?

An image caption generation model generally works as follows: it takes an image as input, identifies its relevant parts, and generates a textual description in grammatically correct sentences. You can see what the output looks like in the picture below. As we can see, the captions are quite accurate and grammatically correct. These captions are generated using an image captioning model with attention, which we will discuss below.

Encoder-decoder framework

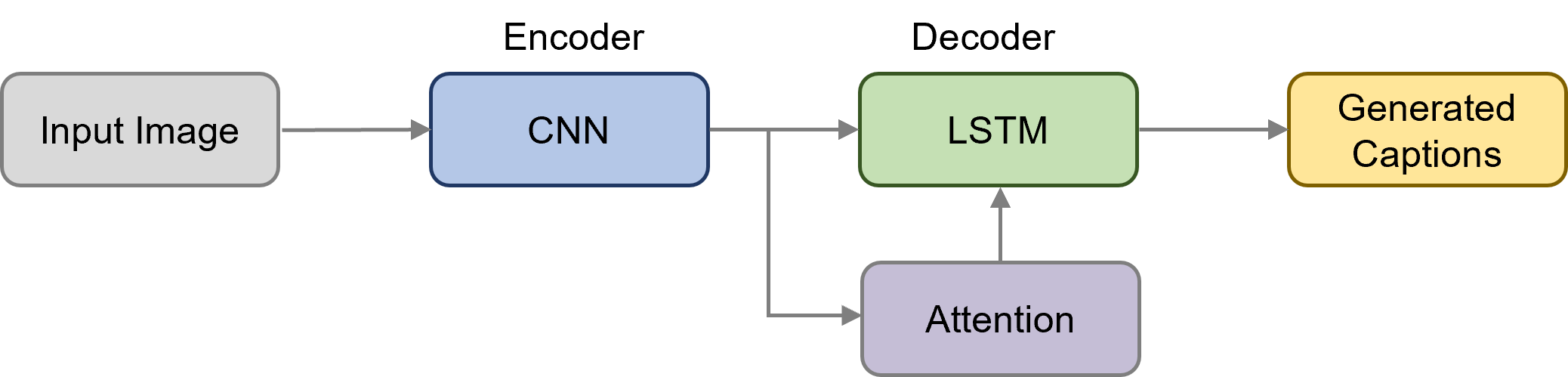

Most image captioning systems use an encoder-decoder structure that allows us to train the model in an end-to-end fashion. The encoder is an image feature extraction model, typically a CNN (Convolutional Neural Network). The decoder is a language model, typically an LSTM (Long Short-Term Memory) network or another RNN (Recurrent Neural Network) based model.

To extract image features, we don't have to train a CNN model from scratch. Image caption generation methods use transfer learning, extracting visual information with pre-trained Convolutional Neural Network models. These pre-trained models are networks that were previously trained on the ILSVRC ImageNet challenge. Image classification models trained on the ImageNet dataset usually generalize well and can be applied effectively as generic image feature extractors for various computer vision tasks. For this reason, it is common to use pre-trained CNNs to obtain visual features for image captioning. Here are some popular models pre-trained on ImageNet:

VGGNet

ResNet

Inception V3

Since those models are trained to classify objects in images, the classifier layer is skipped and the feature map representation is extracted when they are applied to image captioning. We are not going into details about CNNs here. Check out this article if you want to learn how they work.

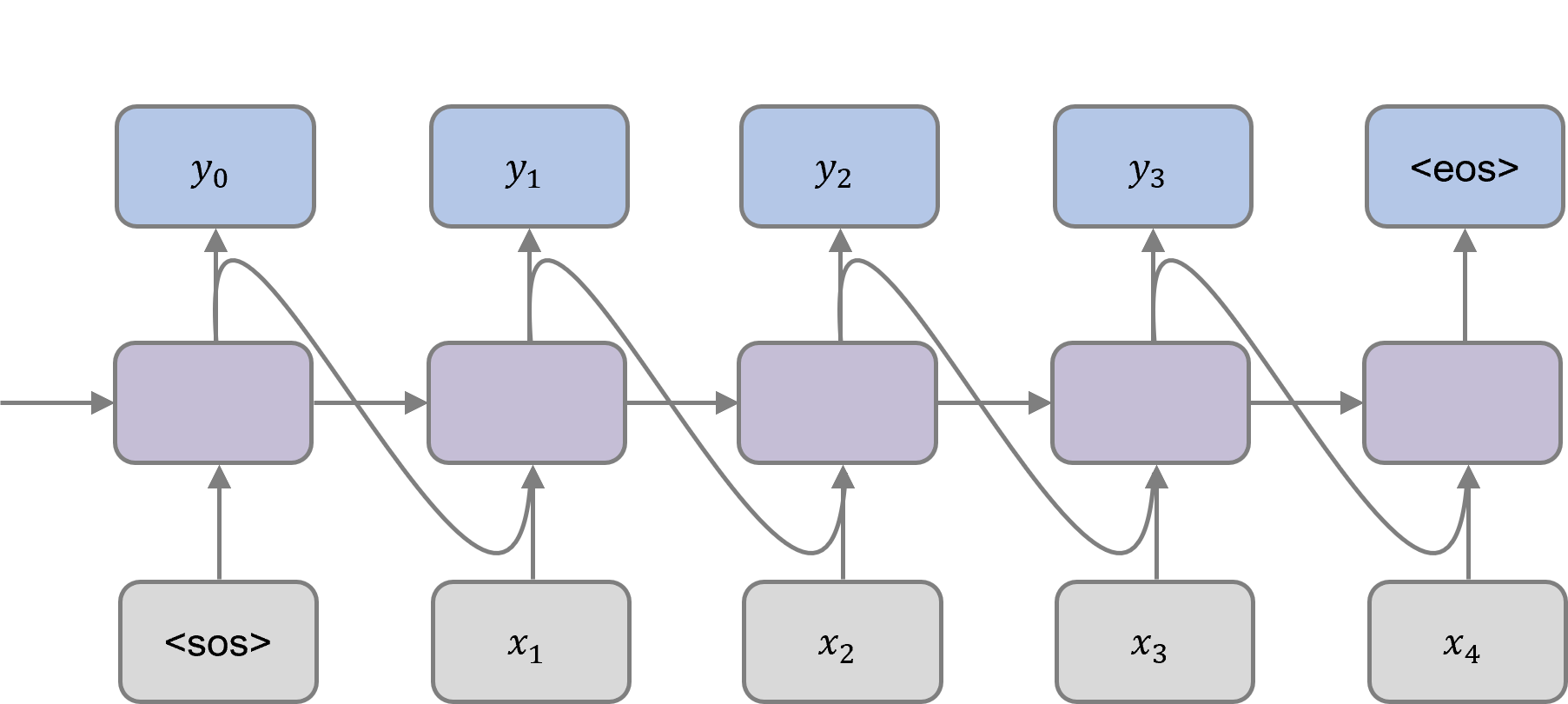

Image features in the form of a fixed-length vector are fed to the language decoder as input along with the special "<sos>" (start of sequence) token that indicates the beginning of the sequence. The decoder takes the hidden state from the previous time step and the previous predicted word at each time step to generate the output word for the current time step. This process continues until the "<eos>" (end of sequence) token is predicted.
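The decoding loop described above can be sketched in a few lines of Python. This is only a minimal illustration: `toy_decoder_step` is a hypothetical stand-in for a trained LSTM step (it replays a fixed caption and ignores the image features), and `max_length` is an assumed safety limit that is not part of the description above.

```python
SOS, EOS = "<sos>", "<eos>"

def toy_decoder_step(image_features, hidden_state, previous_word):
    """Hypothetical stand-in for one LSTM step.

    A real decoder would compute the next word from the image features,
    the hidden state, and the previous word. This toy version replays a
    fixed caption and uses the hidden state as a position counter.
    """
    script = ["a", "dog", "catches", "a", "frisbee", EOS]
    next_word = script[hidden_state]
    return next_word, hidden_state + 1

def greedy_decode(image_features, decoder_step, max_length=20):
    """Generate words one at a time until <eos> or max_length is reached."""
    hidden_state = 0       # initial hidden state (toy representation)
    previous_word = SOS    # decoding starts from the start-of-sequence token
    caption = []
    for _ in range(max_length):
        previous_word, hidden_state = decoder_step(
            image_features, hidden_state, previous_word
        )
        if previous_word == EOS:
            break          # the end-of-sequence token stops generation
        caption.append(previous_word)
    return caption

caption = greedy_decode([0.1, 0.7, 0.2], toy_decoder_step)
print(" ".join(caption))
```

In a real system, the step function would return a probability distribution over the vocabulary, and the loop would pick the most likely word (or use a search strategy such as beam search).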

Encoder-decoder with attention

Image captioning has been considerably enhanced by the introduction of attention, a mechanism that helps the model focus on the salient parts of the image and ignore redundant content. In simple terms, attention is a weighted sum of encoder outputs. When attention is incorporated into the encoder-decoder framework, the CNN first processes the image and outputs a set of feature maps. The attention module then takes those feature maps along with the decoder's hidden state and assigns a weight to each spatial location of the feature maps, each of which corresponds to a region of the image. The weighted sum of the features, known as the context vector, is then concatenated with the input word for that time step and passed to the LSTM network. This allows the decoder to pay attention to the most relevant part of the image while generating each word of the output sequence. Check out this article to learn more about the attention mechanism and its implementation.
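To make the idea of attention as a weighted sum of encoder outputs concrete, here is a minimal sketch in plain Python. It rests on several assumptions: the scoring function is a simple dot product between each feature vector and the hidden state (captioning models usually learn this function, often as a small feed-forward network), and the feature values, hidden state, and `word_embedding` are made-up toy numbers.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    highest = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attend(features, hidden_state):
    """Return attention weights and the weighted sum of the features.

    features: one vector per spatial location of the CNN feature maps.
    hidden_state: the decoder's hidden state from the previous step.
    Assumption: dot-product scoring, chosen here only for brevity.
    """
    scores = [
        sum(f * h for f, h in zip(feature, hidden_state))
        for feature in features
    ]
    weights = softmax(scores)
    dim = len(features[0])
    context = [
        sum(w * feature[d] for w, feature in zip(weights, features))
        for d in range(dim)
    ]
    return weights, context

# Three image locations with 2-dimensional features (toy values).
features = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
hidden_state = [2.0, 0.0]

weights, context = attend(features, hidden_state)

# The weighted sum (often called the context vector) is concatenated
# with the embedding of the input word before it is passed to the LSTM.
word_embedding = [0.5, -0.5]  # hypothetical embedding
lstm_input = word_embedding + context

print(weights)
print(lstm_input)
```

Locations whose features align with the hidden state receive larger weights, so they contribute more to the vector that the decoder sees at that time step.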

The visualization of the attention weights demonstrates which parts of the image the model is paying attention to when generating a particular word. In the following examples, the white highlights indicate the attended regions when the underlined words are being generated. For instance, in the upper left image, at the time step where the decoder needs to output the word “frisbee”, it will focus its attention on the frisbee in the image. Accordingly, when the word “woman” is being generated, the highlight would be around the woman in the image.
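A visualization like this can be produced by reshaping the attention weights for one word into the spatial grid of the feature maps and enlarging that grid to the image size. The sketch below assumes a tiny 2x2 grid with made-up weights and uses nearest-neighbor upsampling for simplicity; real visualizations typically use smoother interpolation and overlay the result on the image with a plotting library.

```python
def weights_to_grid(weights, grid_height, grid_width):
    """Reshape a flat list of attention weights into a 2D grid."""
    assert len(weights) == grid_height * grid_width
    return [
        weights[row * grid_width:(row + 1) * grid_width]
        for row in range(grid_height)
    ]

def upsample_nearest(grid, scale):
    """Enlarge the grid by repeating every cell `scale` times per axis."""
    upsampled = []
    for row in grid:
        wide_row = [value for value in row for _ in range(scale)]
        upsampled.extend(list(wide_row) for _ in range(scale))
    return upsampled

# Hypothetical attention weights for one generated word over a 2x2 grid.
weights = [0.05, 0.05, 0.10, 0.80]
grid = weights_to_grid(weights, grid_height=2, grid_width=2)
heatmap = upsample_nearest(grid, scale=2)  # 4x4 map aligned with the image

for row in heatmap:
    print(row)
```

Here the bottom-right region holds most of the weight, so it would appear as the brightest highlight when the heatmap is overlaid on the image.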

Conclusion

In this article, we examined the big picture of how the image caption generation pipeline works. In particular, we looked at the attention mechanism, which is one of the many methods used for this task. We saw how attention is used to dynamically focus on different parts of the input image while the caption is being produced. Although image captioning models have improved significantly over the last few years, the task remains an open challenge.
