Reinforcement Learning with Random Time Horizons

by Enric Ribera Borrell, Lorenz Richter, Christof Schütte

Year:

2025

Publication:

International Conference on Machine Learning (ICML)

Abstract:

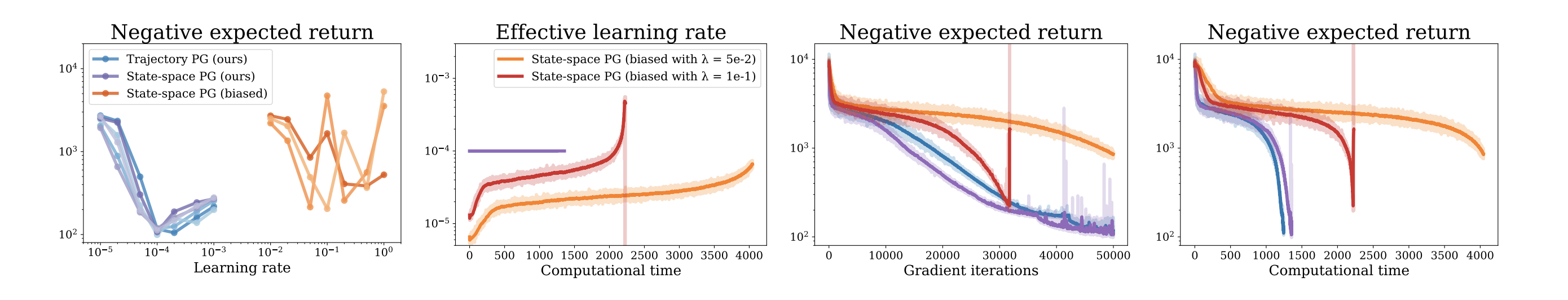

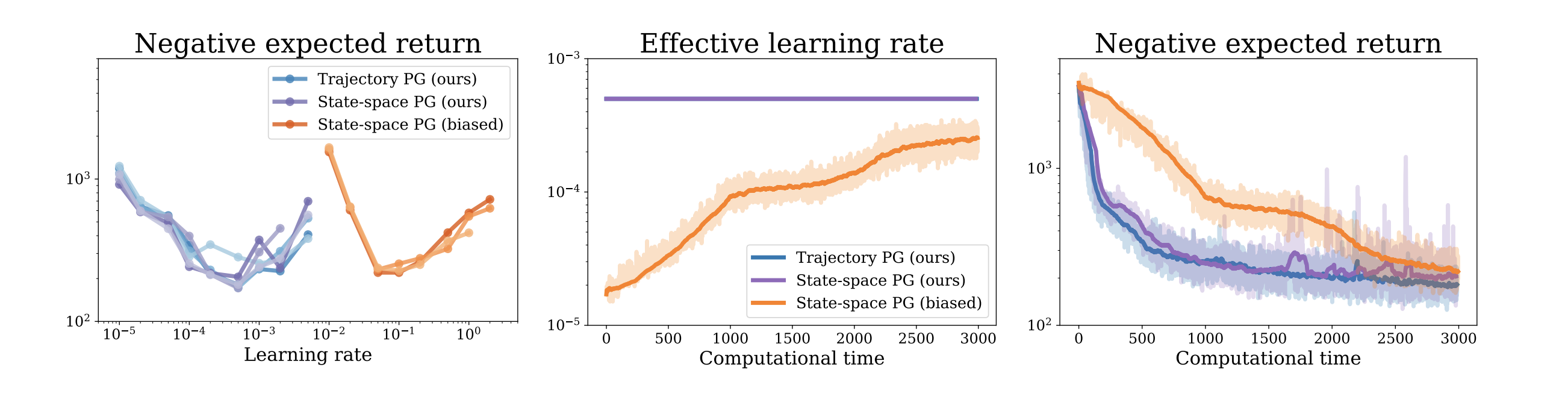

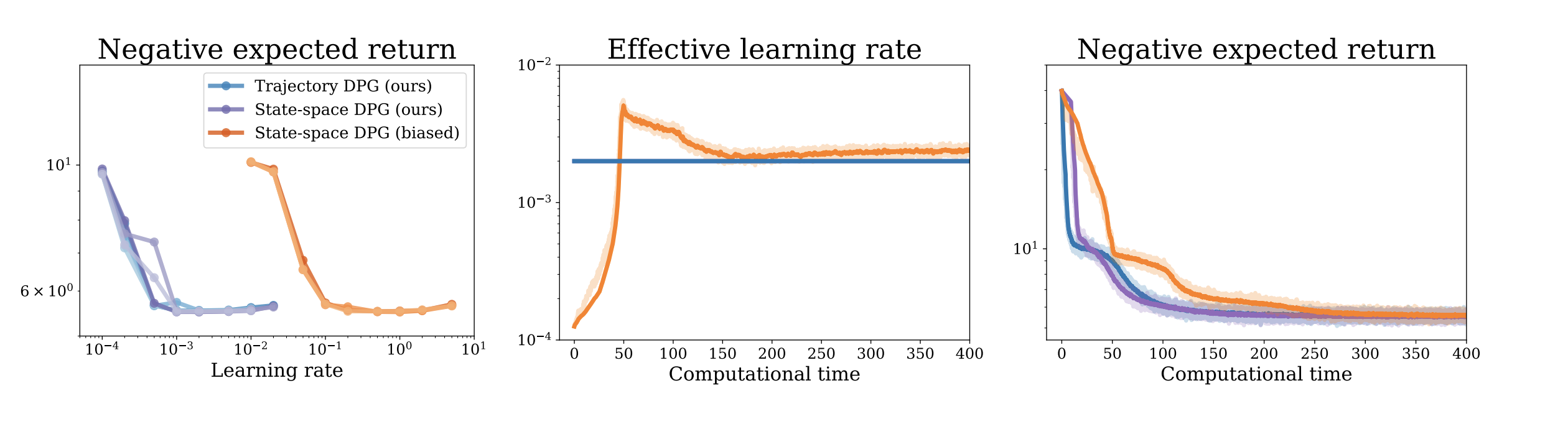

We extend the standard reinforcement learning framework to random time horizons. While the classical setting typically assumes finite and deterministic or infinite runtimes of trajectories, we argue that multiple real-world applications naturally exhibit random (potentially trajectory-dependent) stopping times. Since those stopping times typically depend on the policy, their randomness has an effect on policy gradient formulas, which we (mostly for the first time) derive rigorously in this work both for stochastic and deterministic policies. We present two complementary perspectives, trajectory or state-space based, and establish connections to optimal control theory. Our numerical experiments demonstrate that using the proposed formulas can significantly improve optimization convergence compared to traditional approaches.
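To make the setting concrete, here is a minimal sketch of a policy-dependent random stopping time. The toy problem is an assumption chosen for illustration and is not taken from the paper: a random walk on {0, ..., 10} starts at 5 and stops when it first hits either boundary, the policy steps right with probability sigmoid(theta), and the return is the negative stopping time. The gradient is estimated with the standard score-function (REINFORCE) estimator summed up to the stopping time, which is not necessarily one of the formulas derived in the paper. All function names are hypothetical.

```python
import math
import random


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def run_episode(theta, rng, n=10):
    """Simulate one walk on {0, ..., n} until it first hits 0 or n.

    Returns the random stopping time tau and the accumulated score
    sum_{t < tau} d/dtheta log pi_theta(a_t).
    """
    p_right = sigmoid(theta)
    x = n // 2
    tau = 0
    score = 0.0
    while 0 < x < n:  # the episode length is random and depends on theta
        if rng.random() < p_right:
            x += 1
            score += 1.0 - p_right  # d/dtheta log sigmoid(theta)
        else:
            x -= 1
            score += -p_right  # d/dtheta log(1 - sigmoid(theta))
        tau += 1
    return tau, score


def estimate(theta, episodes=5000, seed=0):
    """Monte Carlo estimates of E[tau] and of d/dtheta E[-tau]."""
    rng = random.Random(seed)
    total_tau = 0.0
    total_grad = 0.0
    for _ in range(episodes):
        tau, score = run_episode(theta, rng)
        total_tau += tau
        total_grad += -tau * score  # return (-tau) times accumulated score
    return total_tau / episodes, total_grad / episodes


mean_tau, grad = estimate(theta=1.0)
print(f"mean stopping time: {mean_tau:.2f}, gradient estimate: {grad:.2f}")
```

In this toy case a larger theta pushes the walk to the right boundary faster, so the estimated gradient of the expected return should come out positive at theta = 1.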

Link:

Read the paper

Additional Information

Brief introduction of the dida co-author(s) and relevance for dida's ML developments.

Lorenz Richter (PhD)

With an original focus on stochastics and numerics (FU Berlin), the mathematician has been working on deep learning algorithms for some time. Besides his interest in theory, he has solved multiple practical data science problems over the last 10 years. Lorenz leads the machine learning team.